RESEARCH

Tree Bandits for Generative Bayes

Sean O’Hagan, Jungeum Kim, and Veronika Rockova (2024), JASA (minor revision)

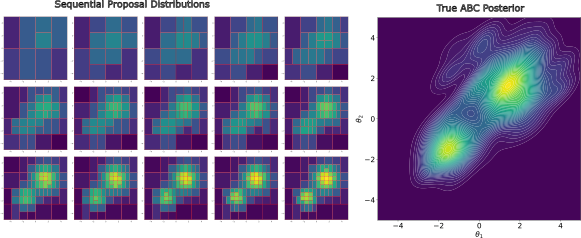

We develop a self-aware framework that reduces to a binary bandit problem, treating ABC acceptance as the reward. Each arm is a box obtained from recursive partitioning classifiers fit sequentially to the ABC lookup table. This bandit approach accelerates ABC rejection sampling. (draft, slide)
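To make "acceptance as reward" concrete, here is a minimal sketch under toy assumptions, not the authors' method. The model (a uniform prior on [0, 10], a simulator y ~ N(theta, 1)), the five fixed equal-width boxes (standing in for the boxes the paper derives from recursive partitioning classifiers), and the Thompson-sampling policy with Beta(1, 1) priors are all our own illustrative choices; `abc_bandit` is a hypothetical name. Accepted draws come from a box-dependent proposal, so they would need reweighting before being treated as ABC posterior samples. The sketch does not do that.

```python
import random

def abc_bandit(y_obs, eps, boxes, n_pulls, seed=0):
    """Hypothetical sketch: each arm is a box of parameter space; reward = ABC acceptance."""
    rng = random.Random(seed)
    alpha = [1.0] * len(boxes)  # Beta(1, 1) prior on each arm's acceptance rate
    beta = [1.0] * len(boxes)
    pulls = [0] * len(boxes)
    accepted = []  # (theta, arm) pairs; NOT reweighted for the adaptive proposal
    for _ in range(n_pulls):
        # Thompson sampling: draw a plausible acceptance rate per arm, play the largest.
        k = max(range(len(boxes)), key=lambda j: rng.betavariate(alpha[j], beta[j]))
        lo, hi = boxes[k]
        theta = rng.uniform(lo, hi)        # uniform prior restricted to box k
        y_sim = rng.gauss(theta, 1.0)      # toy simulator: y ~ N(theta, 1)
        reward = abs(y_sim - y_obs) < eps  # ABC acceptance is the binary reward
        pulls[k] += 1
        if reward:
            alpha[k] += 1
            accepted.append((theta, k))
        else:
            beta[k] += 1
    return pulls, accepted

boxes = [(2.0 * i, 2.0 * (i + 1)) for i in range(5)]  # five equal boxes on [0, 10]
pulls, accepted = abc_bandit(y_obs=7.3, eps=0.5, boxes=boxes, n_pulls=2000)
print(pulls, len(accepted) / 2000)
```

Plain rejection sampling from the full prior accepts roughly 10% of draws in this toy setup, while the bandit concentrates its pulls on the box [6, 8) around the observed value and accepts a noticeably larger fraction.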

Jungeum Kim, Percy Zhai, and Veronika Rockova (2024), AISTATS

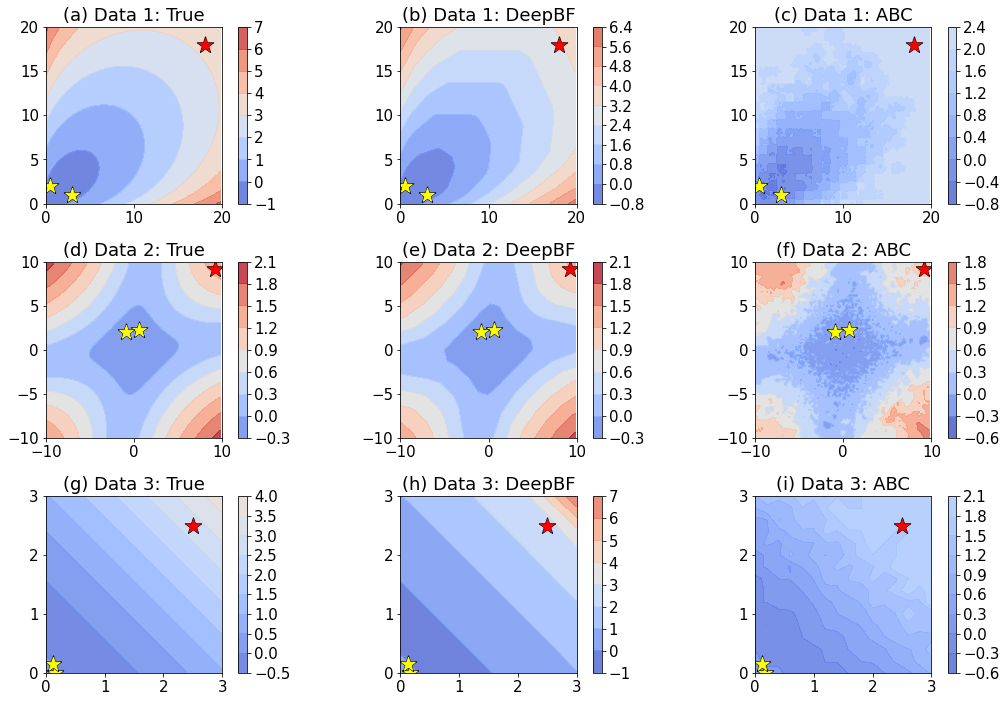

Vector quantiles can be used to build a generative Bayesian posterior sampler. Its features include automatic summary statistics and contraction (support shrinkage) of our posterior approximation, as shown in the image. We use Monge-Kantorovich depth for multivariate quantiles to sample directly from Bayesian credible sets. (draft, slide, code)
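For readers unfamiliar with Monge-Kantorovich (MK) quantiles, the sketch below shows the basic object, not the authors' generative sampler. Empirical MK ranks assign each 2-D sample to a point of a reference grid on the unit disk so that the total squared distance is minimal (a discrete optimal transport problem), and a center-outward region keeps the samples whose rank lies near the origin. The grid layout (radii j/(n_radii+1), equally spaced angles), the brute-force assignment (feasible only for tiny n), and the helper names `reference_grid`, `mk_ranks`, and `central_region` are our assumptions.

```python
import itertools
import math

def reference_grid(n_radii, n_dirs):
    """Hypothetical regular grid on the open unit disk (stand-in for a uniform reference)."""
    pts = []
    for j in range(1, n_radii + 1):
        r = j / (n_radii + 1)
        for s in range(n_dirs):
            a = 2 * math.pi * s / n_dirs
            pts.append((r * math.cos(a), r * math.sin(a)))
    return pts

def mk_ranks(data, grid):
    """Empirical MK ranks: optimal assignment of data to grid points (brute force, tiny n)."""
    n = len(data)
    assert n == len(grid)

    def cost(perm):
        # Total squared Euclidean distance for the assignment i -> grid[perm[i]].
        return sum((data[i][0] - grid[perm[i]][0]) ** 2 +
                   (data[i][1] - grid[perm[i]][1]) ** 2 for i in range(n))

    best = min(itertools.permutations(range(n)), key=cost)
    return [grid[best[i]] for i in range(n)]

def central_region(data, ranks, tau):
    """Samples whose MK rank lies within radius tau of the origin (center-outward region)."""
    return [x for x, u in zip(data, ranks) if math.hypot(*u) <= tau]

grid = reference_grid(n_radii=2, n_dirs=3)                # 6 reference points
data = [(5 * u0 + 1, 5 * u1 + 2) for u0, u1 in grid]      # scaled, shifted copy of the grid
ranks = mk_ranks(data, grid)
print(central_region(data, ranks, tau=0.4))
```

Because the toy data are a scaled and shifted copy of the grid, the optimal assignment is the identity, and the region with tau = 0.4 keeps exactly the three samples mapped to the inner ring.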

Adaptive Uncertainty Quantification for Generative AI

Jungeum Kim*, Sean O’Hagan*, and Veronika Rockova (2024)

Our empirical results show that conformalizing ChatGPT predictions with our adaptive approach (1) yields tighter sets than split-conformal prediction for most test observations, and (2) can communicate “I do not know” through wide sets when the language model is likely to “hallucinate” its response to a query. Our paper is not meant to encourage users to rely on ChatGPT for predictions. It only illustrates that if one were to do so, proper accounting for uncertainty is needed. (draft, slide, code)
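For reference, the split-conformal baseline mentioned above can be sketched as follows. This is the standard baseline, not the authors' adaptive method. We assume numeric predictions and absolute-residual nonconformity scores; `split_conformal_interval` is a hypothetical helper name.

```python
import math

def split_conformal_interval(cal_preds, cal_targets, test_pred, alpha=0.1):
    """Standard split-conformal interval with absolute-residual scores (baseline sketch)."""
    scores = sorted(abs(y - p) for p, y in zip(cal_preds, cal_targets))
    n = len(scores)
    k = math.ceil((n + 1) * (1 - alpha))  # rank of the finite-sample conformal quantile
    if k > n:
        return (-math.inf, math.inf)      # too few calibration points: trivial interval
    q = scores[k - 1]
    return (test_pred - q, test_pred + q)

cal_preds = [0.0] * 10
cal_targets = [float(i) for i in range(1, 11)]  # absolute residuals 1, ..., 10
print(split_conformal_interval(cal_preds, cal_targets, test_pred=5.0, alpha=0.1))
```

The baseline applies the same half-width q to every test point; an adaptive method can instead shrink the set where the model is reliable and widen it where it is likely to hallucinate.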

On Mixing Rates for Bayesian CART

Jungeum Kim and Veronika Rockova (2023), EJS

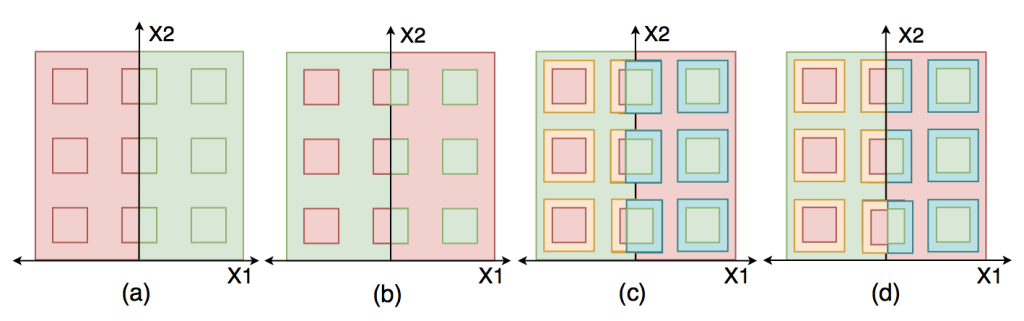

We found that Bayesian CART can mix slowly or quickly depending on the intrinsic structure of the data. Instead of the standard local moves, we propose new, more aggressive moves, called twiggy movements, which remove this dependence on the data structure. (draft, slide, video)

Jungeum Kim and Xiao Wang (2023), TMLR

We propose a manifold learning method for visualization that constructs and preserves locally adaptive global distances. Starting from a random initialization, our algorithm shows a clear progression from global formation to local details within a single optimization process. (draft, code)

Robust Sensible Adversarial Learning of Deep Neural Networks for Image Classification

Jungeum Kim and Xiao Wang (2022), AOAS

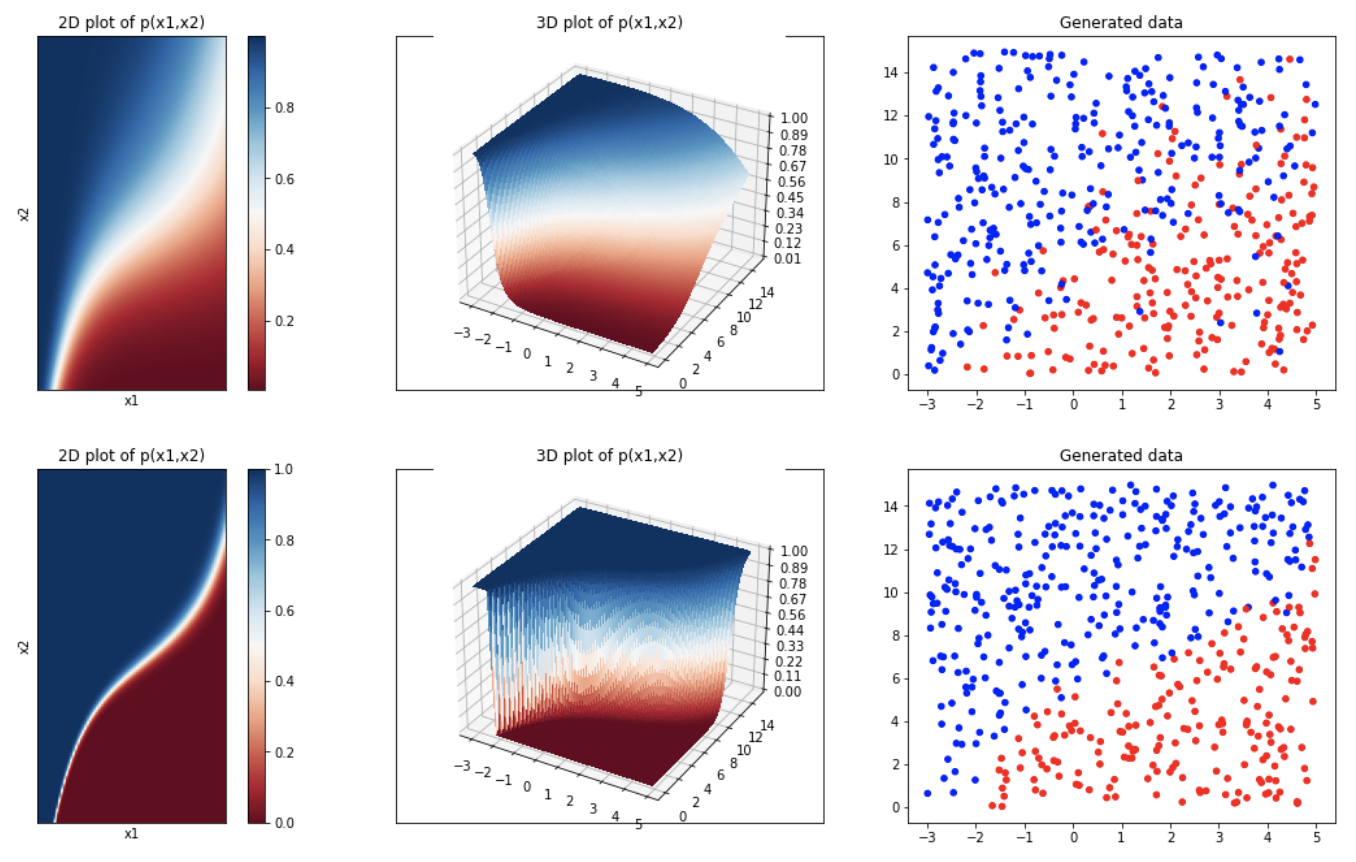

The Bayes classifier can in fact be the most robust classifier. Therefore, adversarial training for robust classification with deep neural networks should still aim to learn the Bayes classifier.

Purdue University Graduate School PhD Thesis.